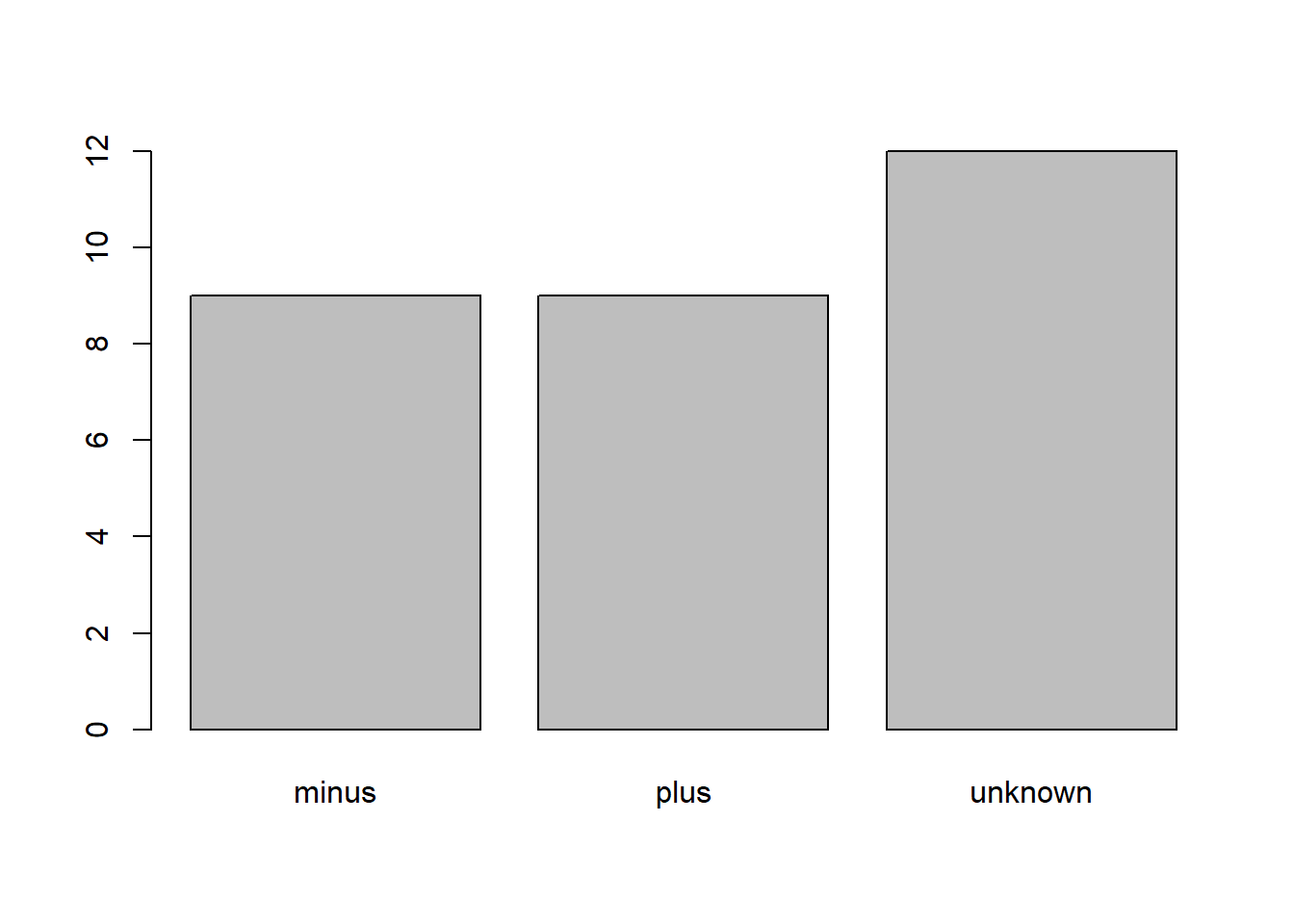

Is it a problem that the histogram only has 3 steps? It looks like information is preserved from our point of view. Here's the the histogram looks like for 10k rows: RANGE_HI_KEY RANGE_ROWS EQ_ROWS DISTINCT_RANGE_ROWS AVG_RANGE_ROWS SELECT 'X_STATS_SEQ_INT_FULL', * rows_sampled, stepsįROM this data the number of histogram steps quickly increases to 200 (it first hits the max number of steps with 397 rows), stays at 199 or 200 until 1485 rows are in the table, then slowly decreases until the histogram only has 3 or 4 steps. INSERT INTO X_STATS_RESULTS WITH (TABLOCK) SELECT * - 1) + N FROM STATISTICS X_SEQ_NUM X_STATS_SEQ_INT_FULL WITH FULLSCAN - can comment out FULLSCAN as needed

WHERE OBJECT_ID = name = 'X_STATS_SEQ_INT_FULL' Let's start with just inserting 1 row at a time up to 10000. Below is some test code that I wrote to insert sequential integers into a table, gather stats after each insert, and save information about the stats into a results table. Let's run a few tests on simple data to see how the stats change over time. I've never observed this in practice but I don't see why it wouldn't be possible. What this means is that you could get a different number of histogram steps when updating stats (without FULLSCAN) on the same data. here SQL Server samples around 66.6% of the data from the table to create the histogram. TABLESAMPLE is documented but StatMan and step_direction are not. TABLESAMPLE SYSTEM (6.666667e+001 PERCENT) WITH (READUNCOMMITTED) SELECT TOP 100 PERCENT, step_direction() over (order by NULL) AS įROM. Here's what I got for the same table with 1M rows: - after gathering stats with 1 M rows in table If a table is too big then the automatic sample size falls below 100%. SQL Server picks the automatic sample size based on the size of the table (I think that it's both number of rows and pages in the table). after gathering stats with 1 row in tableįROM. You can see this when the table is very small or you gather stats with the FULLSCAN option: CREATE TABLE X_SHOW_ME_STATMAN (N INT) ĬREATE STATISTICS X_STAT_X_SHOW_ME_STATMAN ON X_SHOW_ME_STATMAN (N) The first one just grabs all of the data from the table without any filtering. For simple statistics objects there are at least two different types of StatMan queries (there are different queries for quick stat updates and I suspect that the incremental stats feature on partitioned tables also uses a different query). There is a function called StatMan within SQL Server that is involved with creating the histograms. You can view these queries with extended events. 
When SQL Server determines that a statistics update is needed it kicks off a hidden query that reads either all of a table's data or a sample of the table's data. For multi column statistics the histogram is only created on the leading column.

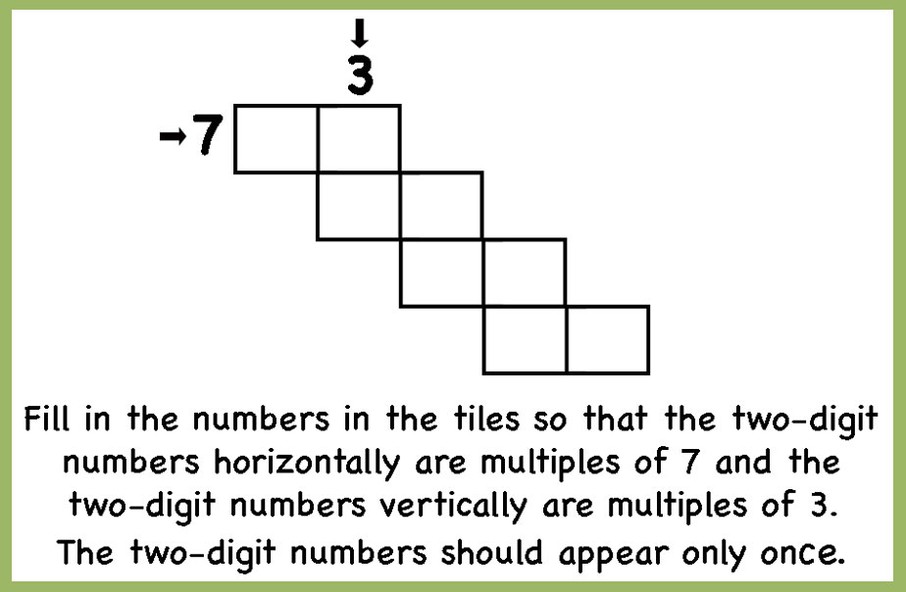

I'm going to limit this post to discussing single column statistics because it'll already be pretty lengthy and you're interested in how SQL Server buckets the data into histogram steps. Now the histogram steps are reduced to 3 steps +-+-+-+-+-+Ĭan someone tell me how these steps are decided? Now the histogram steps are reduced to 4 steps +-+-+-+-+-+ +-+-+-+-+-+Īs we can see there are 53 steps in the histogram.Īgain inserting few thousand records INSERT INTO histogram_step | RANGE_HI_KEY | RANGE_ROWS | EQ_ROWS | DISTINCT_RANGE_ROWS | AVG_RANGE_ROWS | Updating and checking the statistics UPDATE STATISTICS histogram_step WITH fullscanĭBCC show_statistics('histogram_step', pk_histogram_step) Inserting 100 records into my table INSERT INTO histogram_step Schema definition CREATE TABLE histogram_stepĬONSTRAINT pk_histogram_step PRIMARY KEY (id) Why is it restricted to 200 steps even though my key column has more than 200 distinct values? Is there any deciding factor? How are the number of histogram steps decided in Statistics in SQL Server?
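The following script lets you reproduce the step counts from the answer yourself. It is a self-contained sketch and not the author's original test code. The column name `N`, the result-table columns and the variable names are hypothetical. It assumes SQL Server 2016 or later, because it uses `DROP TABLE IF EXISTS`.

Each iteration inserts one sequential integer and rebuilds the statistic `WITH FULLSCAN`. It then records `rows_sampled` and `steps` from `sys.dm_db_stats_properties`.

```sql
-- Sketch only, not the original test script. Column N, the results-table
-- columns and the variable names are hypothetical. Requires SQL Server 2016+.
SET NOCOUNT ON;

DROP TABLE IF EXISTS dbo.X_SEQ_NUM;
DROP TABLE IF EXISTS dbo.X_STATS_RESULTS;

CREATE TABLE dbo.X_SEQ_NUM (N INT NOT NULL);
CREATE STATISTICS X_STATS_SEQ_INT_FULL ON dbo.X_SEQ_NUM (N);

CREATE TABLE dbo.X_STATS_RESULTS
(
    TEST_NAME     VARCHAR(40) NOT NULL,
    ROWS_IN_TABLE INT         NOT NULL,
    ROWS_SAMPLED  BIGINT      NULL,
    STEPS         INT         NULL
);
GO

DECLARE @max_rows INT = 10000;  -- final table size
DECLARE @rows     INT = 0;      -- rows inserted so far
DECLARE @stats_id INT;

-- Look up the statistics object once; dm_db_stats_properties needs its id
SELECT @stats_id = stats_id
FROM sys.stats
WHERE object_id = OBJECT_ID(N'dbo.X_SEQ_NUM')
  AND name = N'X_STATS_SEQ_INT_FULL';

WHILE @rows < @max_rows
BEGIN
    SET @rows += 1;

    -- Add the next sequential integer
    INSERT INTO dbo.X_SEQ_NUM (N) VALUES (@rows);

    -- Rebuild the histogram; remove WITH FULLSCAN to test sampled updates
    UPDATE STATISTICS dbo.X_SEQ_NUM X_STATS_SEQ_INT_FULL WITH FULLSCAN;

    -- Record how many rows were read and how many steps were produced
    INSERT INTO dbo.X_STATS_RESULTS WITH (TABLOCK)
        (TEST_NAME, ROWS_IN_TABLE, ROWS_SAMPLED, STEPS)
    SELECT 'X_STATS_SEQ_INT_FULL', @rows, sp.rows_sampled, sp.steps
    FROM sys.dm_db_stats_properties(OBJECT_ID(N'dbo.X_SEQ_NUM'), @stats_id) AS sp;
END;

SELECT ROWS_IN_TABLE, ROWS_SAMPLED, STEPS
FROM dbo.X_STATS_RESULTS
ORDER BY ROWS_IN_TABLE;
```

Running 10,000 FULLSCAN updates takes a while. Lower `@max_rows` for a quick check.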

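You can also check a statistic's step count and effective sample size without reading `DBCC SHOW_STATISTICS` output, by querying the dynamic management functions directly. This is a sketch under two assumptions:

- The question's table lives in the `dbo` schema.
- The server is SQL Server 2016 SP1 CU2 or later, where `sys.dm_db_stats_histogram` is available.

Comparing `rows` with `rows_sampled` shows whether the last update read the whole table or only a sample.

```sql
-- Sketch: assumes dbo.histogram_step from the question and SQL Server 2016 SP1 CU2+.
-- Summary per statistics object: rows in the table, rows read, and step count
SELECT s.name AS stats_name,
       sp.rows,
       sp.rows_sampled,
       sp.steps,
       sp.last_updated
FROM sys.stats AS s
CROSS APPLY sys.dm_db_stats_properties(s.object_id, s.stats_id) AS sp
WHERE s.object_id = OBJECT_ID(N'dbo.histogram_step');

-- The histogram itself, one row per step
SELECT h.step_number,
       h.range_high_key,
       h.range_rows,
       h.equal_rows,
       h.distinct_range_rows,
       h.average_range_rows
FROM sys.stats AS s
CROSS APPLY sys.dm_db_stats_histogram(s.object_id, s.stats_id) AS h
WHERE s.object_id = OBJECT_ID(N'dbo.histogram_step')
  AND s.name = N'pk_histogram_step'
ORDER BY h.step_number;
```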